
Meld windows requires python

11/16/2023

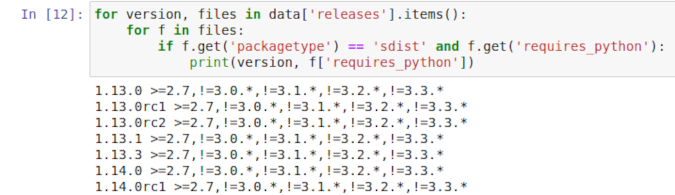

With SageMaker JumpStart, ML practitioners can choose from a broad selection of publicly available foundation models. ML practitioners can deploy foundation models to dedicated Amazon SageMaker instances from a network isolated environment and customize models using SageMaker for model training and deployment. You can now discover and deploy Llama 2 with a few clicks in Amazon SageMaker Studio or programmatically through the SageMaker Python SDK, enabling you to derive model performance and MLOps controls with SageMaker features such as Amazon SageMaker Pipelines, Amazon SageMaker Debugger, or container logs. The model is deployed in an AWS secure environment and under your VPC controls, helping ensure data security. Llama 2 models are available today in Amazon SageMaker Studio in the us-east-1, us-west-2, eu-west-1, and ap-southeast-1 Regions.

You can access the foundation models through SageMaker JumpStart in the SageMaker Studio UI and the SageMaker Python SDK. In this section, we go over how to discover the models in SageMaker Studio. SageMaker Studio is an integrated development environment (IDE) that provides a single web-based visual interface where you can access purpose-built tools to perform all ML development steps, from preparing data to building, training, and deploying your ML models. For more details on how to get started and set up SageMaker Studio, refer to Amazon SageMaker Studio.

Once you're in SageMaker Studio, you can access SageMaker JumpStart, which contains pre-trained models, notebooks, and prebuilt solutions, under Prebuilt and automated solutions. From the SageMaker JumpStart landing page, you can browse for solutions, models, notebooks, and other resources. You can find two flagship Llama 2 models in the Foundation Models: Text Generation carousel. If you don't see Llama 2 models, update your SageMaker Studio version by shutting down and restarting. For more information about version updates, refer to Shut down and Update Studio Apps.
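For the programmatic route through the SageMaker Python SDK, a minimal sketch might look like the following. It rests on several assumptions that are not stated in the post: the model ID `meta-textgeneration-llama-2-7b-f`, the chat-style request shape, the sampling parameters, and an SDK version whose `deploy` accepts `accept_eula`. The helper names `build_chat_payload` and `deploy_and_ask` are hypothetical. Deploying creates a billable endpoint and needs AWS credentials, so treat this as an outline rather than a tested recipe.

```python
def build_chat_payload(user_message, max_new_tokens=256, top_p=0.9, temperature=0.6):
    """Build a request body in the assumed Llama-2-chat shape:
    a list of dialogs, each dialog a list of role/content turns."""
    return {
        "inputs": [[{"role": "user", "content": user_message}]],
        "parameters": {
            "max_new_tokens": max_new_tokens,
            "top_p": top_p,
            "temperature": temperature,
        },
    }


def deploy_and_ask(user_message, model_id="meta-textgeneration-llama-2-7b-f"):
    """Deploy the model, send one prompt, then delete the endpoint.

    Assumption: the installed sagemaker SDK supports accept_eula on deploy.
    """
    # Imported lazily so build_chat_payload works without the SDK installed.
    from sagemaker.jumpstart.model import JumpStartModel

    model = JumpStartModel(model_id=model_id)
    predictor = model.deploy(accept_eula=True)  # starts a billable endpoint
    try:
        return predictor.predict(build_chat_payload(user_message))
    finally:
        predictor.delete_endpoint()  # avoid leaving the endpoint running


if __name__ == "__main__":
    # Only builds the payload; call deploy_and_ask() explicitly to use AWS.
    print(build_chat_payload("What is Meld?"))
```

Keeping the payload builder separate from the deployment call makes it possible to inspect the request locally before any AWS resources are created.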
Llama 2 was pre-trained on 2 trillion tokens of data from publicly available sources. The tuned models are intended for assistant-like chat, whereas pre-trained models can be adapted for a variety of natural language generation tasks. Regardless of which version of the model a developer uses, the responsible use guide from Meta can assist in guiding additional fine-tuning that may be necessary to customize and optimize the models with appropriate safety mitigations.

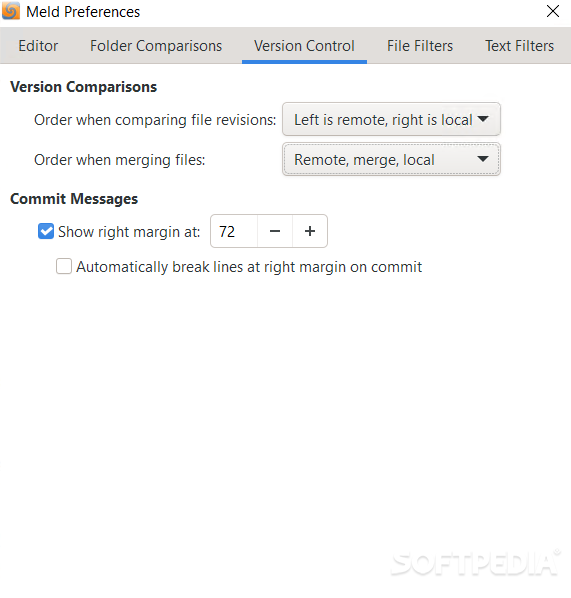

Today, we are excited to announce that Llama 2 foundation models developed by Meta are available for customers through Amazon SageMaker JumpStart. The Llama 2 family of large language models (LLMs) is a collection of pre-trained and fine-tuned generative text models ranging in scale from 7 billion to 70 billion parameters. Fine-tuned LLMs, called Llama-2-chat, are optimized for dialogue use cases. You can easily try out these models and use them with SageMaker JumpStart, which is a machine learning (ML) hub that provides access to algorithms, models, and ML solutions so you can quickly get started with ML. In this post, we walk through how to use Llama 2 models via SageMaker JumpStart.

Llama 2 is an auto-regressive language model that uses an optimized transformer architecture. Llama 2 is intended for commercial and research use in English. It comes in a range of parameter sizes (7 billion, 13 billion, and 70 billion) as well as pre-trained and fine-tuned variations. According to Meta, the tuned versions use supervised fine-tuning (SFT) and reinforcement learning with human feedback (RLHF) to align to human preferences for helpfulness and safety.

The notes for installing under Windows are here:

Once you have installed Python, the PyGTK All-in-one installer, and Meld, you can get it to work under Mercurial by:

1. Put the meld.bat file somewhere in your PATH environment variable. In the batch file use the following command (change the path to the path of your Meld installation): C:\Python27\python.exe "C:\Program Files (x86)\meld-1.5.4\bin\meld" %*
2. Edit your mercurial.
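The batch-file step can also be scripted. The sketch below generates a `meld.bat` containing the command quoted in the post; the two paths are copied from that command and must be changed to match your own installation. The helper names `meld_bat_contents` and `write_meld_bat` are hypothetical, and the target directory is assumed to be one that is already on your PATH.

```python
from pathlib import Path

# Paths copied from the command in the post; adjust to your installation.
PYTHON_EXE = r"C:\Python27\python.exe"
MELD_SCRIPT = r"C:\Program Files (x86)\meld-1.5.4\bin\meld"


def meld_bat_contents(python_exe: str = PYTHON_EXE, meld_script: str = MELD_SCRIPT) -> str:
    """Return the batch command. %* forwards every argument passed to
    meld.bat on to Meld. The Meld path is quoted because it contains
    spaces; the Python path is left unquoted, as in the post."""
    return f'{python_exe} "{meld_script}" %*\n'


def write_meld_bat(target_dir: str) -> Path:
    """Write meld.bat into target_dir and return the path of the new file.
    The directory should be listed in PATH for the command to be found."""
    bat_path = Path(target_dir) / "meld.bat"
    bat_path.write_text(meld_bat_contents())
    return bat_path


if __name__ == "__main__":
    # Print the command instead of writing it, so nothing is changed on disk.
    print(meld_bat_contents(), end="")
```

Calling `write_meld_bat` with a folder that is on PATH leaves a `meld.bat` there, after which `meld` can be run from any command prompt.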

Meld is a nice diff and file comparison tool. It can be used instead of WinMerge or the built-in Mercurial diff tool, KDiff3. Where it improves upon WinMerge and KDiff3 is the visualization of differences between files and versions. The differences expand or balloon out from comparison to comparison. This makes it easier to understand where code has been added and removed. It is primarily a Linux tool, but as it's written in Python it will run under Windows with the right supporting tools.
